Our definition of health is evolving. How did we get here, and where are we going?

What does healthy mean to you?

You likely have an intuitive sense of what health feels like—perhaps a combination of your day-to-day well-being compared to when you're sick, the public health messages you've absorbed, and interactions you've had over the years with the healthcare system.

Your personal definition of health is probably shaped by family beliefs, education, societal norms, and advice from healthcare providers. But defining health in a universally meaningful way remains challenging precisely because it is inherently personal and context-dependent.

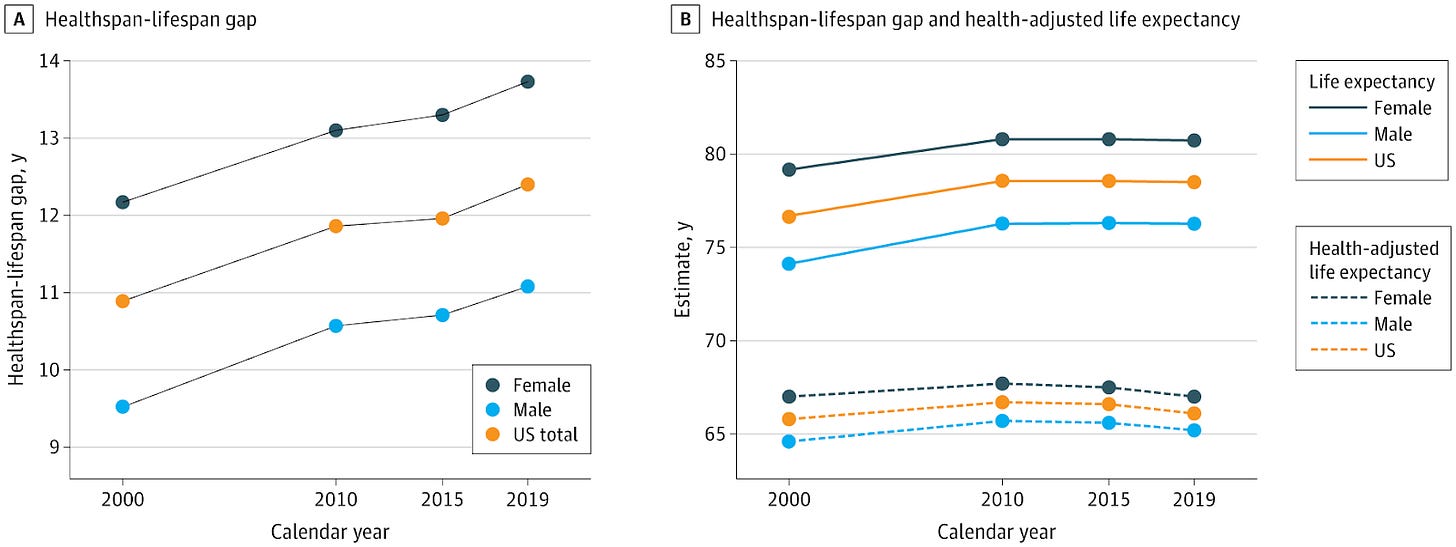

Society’s collective definition of health has evolved significantly over time, directly influencing how resources are allocated toward maintaining population-wide well-being. Historically, modern medicine's successes have shaped a health system centered largely on the absence of disease. We have made enormous progress against infectious disease and have strong, if still imperfect (as COVID-19 showed), mechanisms for containing emerging threats. Yet we are in the midst of a growing chronic disease crisis, and the U.S. has the largest healthspan-lifespan gap in the world.

Garmany & Terzic 2024, JAMA Network Open

This article explores how we arrived at this point: How has the U.S. historically defined health? Why has our definition led us to our current challenges? And importantly, what shifts are necessary to build a future where health means more than merely the absence of disease?

The Biomedical Model: Health as the Absence of Disease

Scientific breakthroughs in the late 1800s and early 1900s dramatically transformed medicine and public health. Discovering specific causes of diseases—such as bacteria, viruses, and nutritional deficiencies—enabled precise, targeted interventions like vaccines and antibiotics. Consequently, medicine's primary goal became the elimination of these disease agents, with health defined as the default state achieved once diseases were removed.

The period saw numerous Nobel Prizes awarded in quick succession for breakthroughs in infectious diseases: 1901 for diphtheria, 1902 for malaria, 1905 for tuberculosis, and 1907 for work on the role of protozoa in causing disease, including malaria and trypanosomiasis.

Soon after, surgical innovations gained recognition, notably in 1909 for thyroid gland surgery and in 1912 for advancements in blood vessel suturing and organ transplantation techniques.

Thus, modern medicine established a clear template: identify a disease, then develop acute treatments like antibiotics or surgical interventions. Defining health as "the absence of disease or infirmity" logically followed this successful approach.

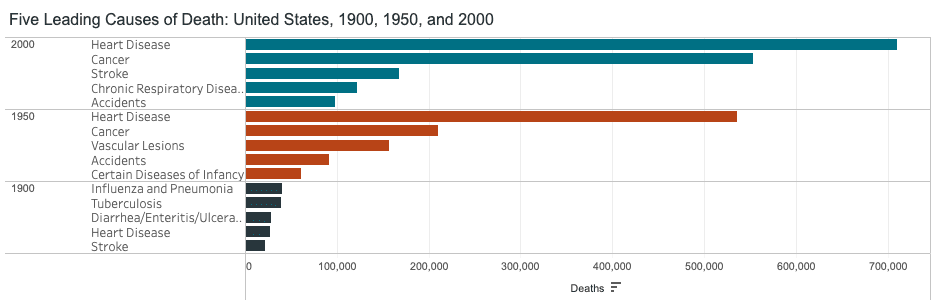

This biomedical model achieved remarkable results, exemplified by a dramatic rise in life expectancy from 47 years in 1900 to 68 years by 1950.

Treating Infectious Disease Became the Model for the Modern Healthcare System

The fight against infectious disease significantly influenced biomedical innovation and the standardization of healthcare practices. A landmark event was the 1948 publication of what is widely regarded as the first randomized controlled trial (RCT), which evaluated the effectiveness of the antibiotic streptomycin in treating tuberculosis. Previous research had demonstrated streptomycin’s efficacy in a dish (in vitro) and in guinea pigs, and preliminary uncontrolled clinical trials were “encouraging but nonconclusive.”

The trial demonstrated that streptomycin produced a statistically significant reduction in deaths and significant clinical improvement in young patients with acute progressive tuberculosis, clearly linking the drug to better outcomes.

The RCT set a new standard for evaluating how to treat disease. It revolutionized healthcare and helped standardize the provision of safe and effective therapies to the public.

Building on this successful paradigm, the medical field focused its efforts on identifying and classifying diseases and developing treatments to eliminate their causes. However, as treatments for infectious diseases and surgical techniques improved, chronic diseases became the major killers.

Mid-20th Century: Chronic Disease and the Rise of "Risk Factors"

By 1950, chronic diseases, including heart disease, cancer, and stroke, already caused more than half of all deaths in the U.S.

This prompted multiple national efforts to identify factors related to chronic diseases.

In 1948, the Framingham Heart Study launched as one of the first major endeavors to “identify the common factors or characteristics that contribute to cardiovascular disease.” In the initial six-year follow-up study, published in 1961, the concept of “risk factors” for heart disease was introduced, highlighting modifiable behaviors and objective metrics such as smoking, high blood pressure, obesity, high cholesterol, and physical inactivity.

In 1956, the National Health Survey Act authorized ongoing statistical surveys to measure illness and disability across the United States. Over the following decades, these surveys further solidified the disease-centric approach, embedding metrics like blood pressure, lipid profiles, hemoglobin A1C, glucose levels, body weight, and body mass index as standards for determining health status. The goal became bringing these metrics into normal ranges through lifestyle and therapeutic interventions.

Over time, it became clear that the model that worked so well for identifying and treating infectious diseases had shortcomings when applied to chronic disease: obesity rates climbed significantly, and the proportion of U.S. adults living with chronic diseases kept rising.

By the late 20th and early 21st centuries, there were clear signs that the view of health needed to shift from one that simply avoids disease to one that actively promotes health status (real-time function and quality of life) and longevity.

Measuring Population Health: From Disease Indicators to Functional Capacity

Next-generation population-wide initiatives in the United States began shifting the focus toward promoting overall health and longevity. The U.S. Surgeon General’s “Healthy People 1990,” released in 1980, established measurable objectives for improving health and well-being nationwide. Although these objectives initially focused on reducing mortality, subsequent iterations emphasized longevity and quality of life.

Healthy People 2000 explicitly aimed to "increase the span of healthy life," while Healthy People 2010 emphasized improving overall quality of life. The latest edition, Healthy People 2030, further emphasizes thriving, health maximization, and prevention of disease and premature death. Its primary goal is to "attain healthy, thriving lives and well-being free of preventable disease, disability, injury, and premature death."

In theory, this is fantastic, given the rising healthspan-lifespan gap. In practice, how will this happen?

To build a society truly geared toward health, we must measure health not merely by the absence of disease but by an individual's functional capacity. Healthspan refers to the number of quality years a person lives in full functional health relative to their age and life stage: able to perform essential physical, cognitive, and daily activities with ease and to remain resilient to life’s stressors. Achieving this requires scaling research-grade assessment methods to the broader population.

Early indications of this shift appear in the latest updates of the Framingham Heart Study, which now includes comprehensive evaluations like cardiopulmonary exercise testing (CPET) to measure VO2max, grip strength, high-resolution bone density tests, omics-based blood metrics, and functional MRI scans of the brain.

Interestingly, CPET was added only in 2015. In 2016, the American Heart Association issued a scientific statement arguing that cardiorespiratory fitness should be treated as a clinical vital sign. Cardiorespiratory fitness is one of the most powerful prognostic markers of mortality.

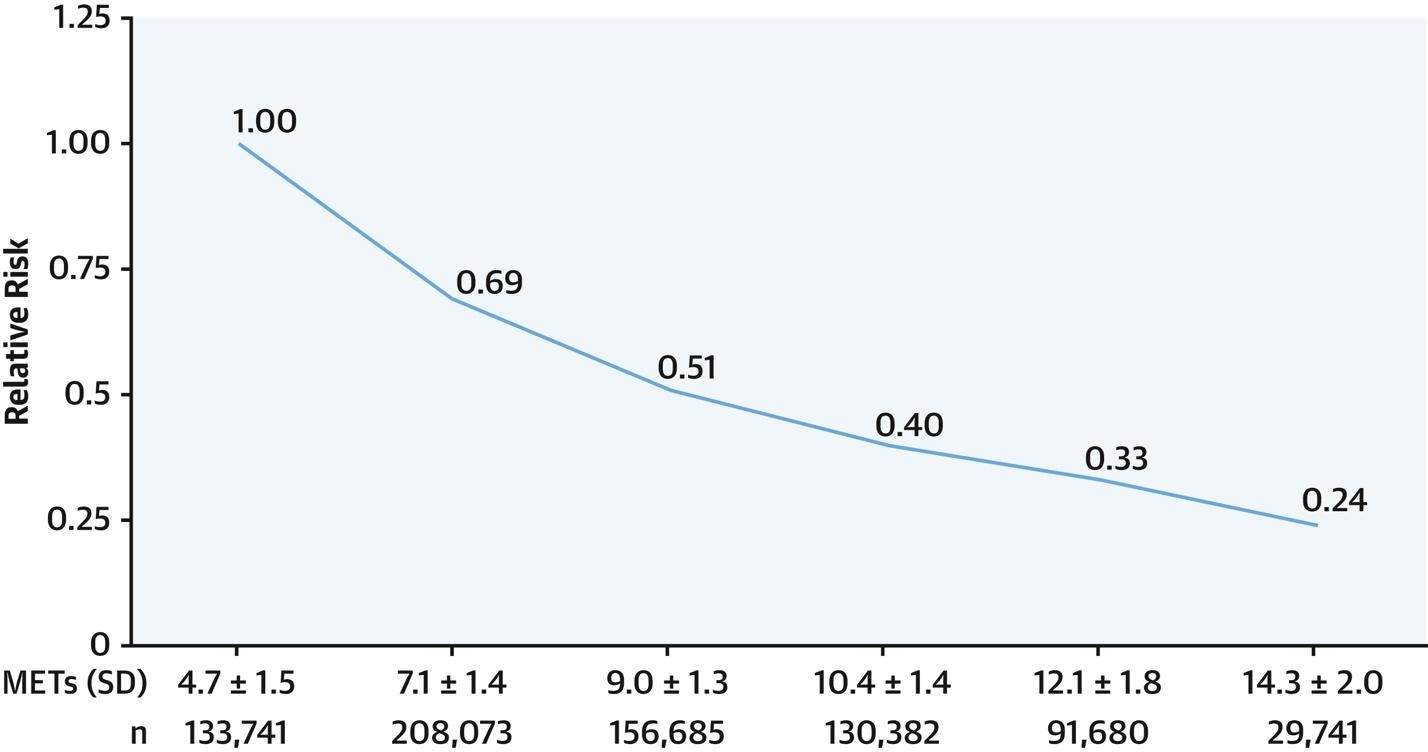

There is a clear dose-response reduction in the relative risk of death with increasing cardiorespiratory fitness, with no apparent upper bound to its protective effect (Kokkinos et al. 2022). This key metric frames health as a spectrum on which lower is worse and higher is better.

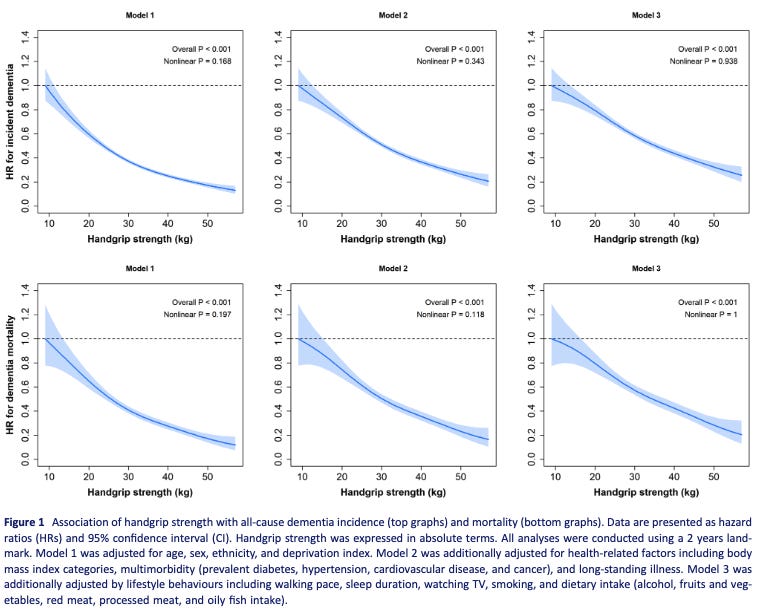

Grip strength shows a similarly clear pattern in which lower is worse and higher is better, at least for all-cause dementia incidence and dementia-associated mortality.

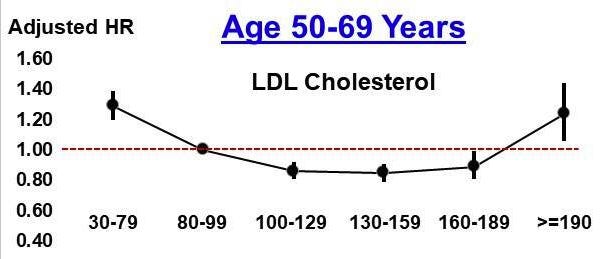

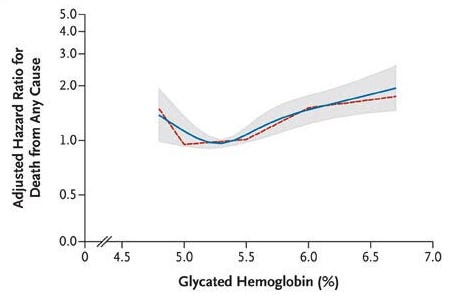

Compare this with LDL and A1C, for which a simple "lower is better" or "higher is better" rule does not always hold. (A deeper dive into some of the world’s hottest biomarkers is coming in future posts.)

Data from Kip et al. 2024 (LDL) and Selvin et al. 2010 (A1C) show J-shaped curves relating blood levels to the hazard ratio for all-cause mortality. Notably, an A1C below 5.0% was associated with a statistically significant increase in the hazard of all-cause mortality (HR 1.43, 95% CI 1.17-1.74) compared with an A1C of 5.0-5.5%.

Future health metrics must therefore focus on assessing individuals' functional capacities—such as intrinsic or vitality capacity—to provide clearer, more actionable measures of health, quality of life, and longevity.

Rethinking Health: Critiques and Emerging Frameworks

While managing population-level lab values has led to significant reductions in mortality, there appears to be a relative plateau in healthspan.

Additionally, when health is defined on a spectrum, recommendations are clear and actionable at any point—one can always improve their health status and decrease mortality risk, rather than wait in the “normal zone” until signs of disease are present.

Now say these new metrics of health are adopted: how might we use this information practically?

Top-down recommendations from healthcare providers (clinicians, dietitians, health coaches)

Peers or digital tools to encourage healthy behaviors

Changing the Healthcare Provider Relationship

Changing healthcare recommendations takes a long time. By the time clinicians are practicing in the real world, they have already learned a gargantuan set of rules. But if novel metrics of health become commonplace, clinicians may be able to focus on the three to five metrics that truly matter, rather than on the complex interpretation of many lab values that fall within the “normal” range, making personalized interventions far more straightforward.

This simplified view, focused on the few proven metrics that both reflect health status and predict all-cause mortality (e.g., VO2max, body composition, grip strength), will serve as the baseline for health exams. While disease screening labs should still be ordered in the presence of symptoms or at specified ages and intervals, the relationship between the healthcare provider and the client may focus largely on lifestyle and behaviors that optimize these key measures of health.

Achieving Health Outside of the Traditional Medical System

With the rise of wearable health monitoring, direct-to-consumer lab testing, the growing model of gyms and wellness centers as clinics, and the recent commercial traction of digital health companies (e.g., Hinge Health’s IPO and Omada Health’s S-1 filing), how people access their health data and healthcare is changing rapidly. Because behavior change is often hailed as the key target for improving chronic disease, technology is uniquely positioned to help shape healthy behaviors.

By the way, this topic deserves a post of its own.

While in LA recently, a friend (Zach Poll) and I discussed how to techify health behaviors. He pointed me to a study showing that basketball teams down by 1 point at halftime were more likely to win than teams up by 1 point at halftime. This study points to the “comeback effect,” in which being slightly behind a competitor may confer a psychological advantage or boost motivation.

What if you were matched with an individual just ahead of (or tied with) you on a certain health metric, and you both agreed to participate in a protocol to improve your health scores?
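The matching idea above could be prototyped in a few lines. The sketch below is a hypothetical illustration, not an existing tool or anyone's actual product: it assumes a single higher-is-better metric (for example, VO2max), and the names `Participant` and `match_slightly_ahead` are made up. It pairs a person with the peer whose score is tied with or closest above theirs, mirroring the "slightly behind" setup suggested by the comeback effect. The scores in the example are invented inputs, not real data.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Participant:
    name: str
    score: float  # hypothetical higher-is-better metric, e.g. VO2max (mL/kg/min)


def match_slightly_ahead(
    me: Participant, pool: Sequence[Participant]
) -> Optional[Participant]:
    """Return the peer whose score is tied with or closest above `me`.

    Returns None when nobody else in the pool is tied or ahead.
    """
    # Keep only other people who are tied with or ahead of `me`.
    candidates = [p for p in pool if p.name != me.name and p.score >= me.score]
    if not candidates:
        return None
    # Pick the smallest non-negative gap; break ties by name for determinism.
    return min(candidates, key=lambda p: (p.score - me.score, p.name))


if __name__ == "__main__":
    # Invented example scores, for illustration only.
    pool = [
        Participant("A", 38.0),
        Participant("B", 41.5),
        Participant("C", 40.2),
        Participant("D", 45.0),
    ]
    me = Participant("me", 40.0)
    print(match_slightly_ahead(me, pool))  # -> Participant(name='C', score=40.2)
```

For a lower-is-better metric, the comparison would need to be flipped, and for a marker with a J-shaped risk curve, such as LDL or A1C, a simple ranking like this would not be an appropriate target.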

A tool like stadium science provides a compelling platform for competitive sleeping. Gym leaderboards in apps like Wodify let members compare themselves not only with other gym-goers but also with their own past performance.

In a proactive, health-promotion-focused system, the traditional clinic will likely not be where most people receive primary care.

Conclusions

The evolution of how we define health has been shaped by each era’s specific needs, initially driven by the remarkable success of the biomedical model in combating infectious diseases through targeted identification and eradication. We’ve made enormous strides over the last century, and this is worth celebrating. But now we need to pivot, and quickly. Chronic diseases are complex and multifactorial, which exposes the limitations of viewing health merely as the absence of disease. Despite changing attitudes, the prevailing disease-centric biomarkers still primarily detect illness after it manifests.

To achieve genuine improvements in population health, we must shift our perception and adopt scalable measures focused on functional health. Disease screening will always play a role, but future health assessments must prioritize evaluating functional capacities and their scalable proxies.

Whether optimal health is achieved within clinics, gyms, or through digital technologies in everyday environments, focusing on the right metrics is essential for advancing individual and public health.

Written by

Brooks Leitner, MD PhD
